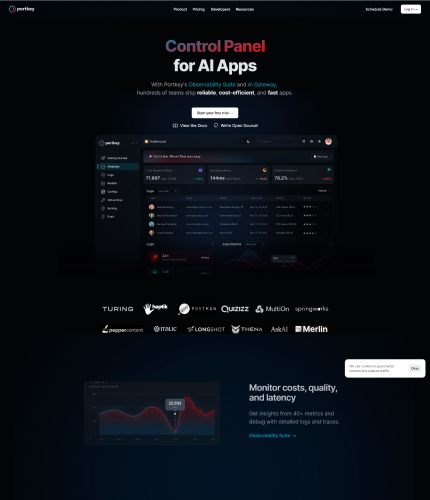

Portkey.ai is an LMOps platform that helps businesses build, release, manage, and improve their generative AI features and apps more quickly. It provides a full-stack operations layer intended to speed up development and improve the performance of AI applications.

Businesses integrate by pointing their existing OpenAI (or other provider) API calls at the Portkey endpoint instead, so the platform slots into their applications with minimal changes. Portkey.ai also offers a single place to manage models, including prompts, engines, parameters, and versions.
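The drop-in integration described above amounts to changing where requests are sent while keeping the request body the same. The Python sketch below illustrates this with an OpenAI-style chat completion request built using only the standard library. `GATEWAY_BASE_URL`, the `x-gateway-key` header, and `build_chat_request` are hypothetical placeholders, not Portkey's actual endpoint or header names, which should be taken from its documentation. Nothing is sent over the network.

```python
import json
from dataclasses import dataclass
from typing import Dict, List, Optional

# Direct provider endpoint (OpenAI-style chat completions API).
OPENAI_BASE_URL = "https://api.openai.com/v1"
# HYPOTHETICAL gateway URL: a placeholder, not Portkey's real endpoint.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"


@dataclass
class ChatRequest:
    """A prepared HTTP POST request; building it does not send anything."""
    url: str
    headers: Dict[str, str]
    body: bytes


def build_chat_request(
    base_url: str,
    api_key: str,
    model: str,
    messages: List[Dict[str, str]],
    extra_headers: Optional[Dict[str, str]] = None,
) -> ChatRequest:
    """Build an OpenAI-style chat completion request against any base URL."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    if extra_headers:
        # Gateway-specific headers (names here are hypothetical) are layered on top.
        headers.update(extra_headers)
    payload = {"model": model, "messages": messages}
    return ChatRequest(
        url=f"{base_url.rstrip('/')}/chat/completions",
        headers=headers,
        body=json.dumps(payload).encode("utf-8"),
    )


if __name__ == "__main__":
    msgs = [{"role": "user", "content": "Hello"}]
    direct = build_chat_request(OPENAI_BASE_URL, "PROVIDER_KEY", "MODEL_NAME", msgs)
    via_gateway = build_chat_request(
        GATEWAY_BASE_URL, "PROVIDER_KEY", "MODEL_NAME", msgs,
        extra_headers={"x-gateway-key": "GATEWAY_KEY"},  # hypothetical header
    )
    # Same payload, different destination.
    print(direct.body == via_gateway.body)  # True
    print(via_gateway.url)  # https://gateway.example.com/v1/chat/completions
```

Because only the URL and headers change, the code that builds the payload does not need to know which endpoint it is talking to; the resulting `ChatRequest` fields could be handed to `urllib.request.Request` or any HTTP client.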

With this in place, users can swap, test, and upgrade models with confidence. To help manage usage and API costs, the platform provides live logs and analytics, so users can track app performance and user-level aggregate metrics.

Because data privacy is a top priority, the Portkey.ai platform is built on modern privacy architectures. The company is currently pursuing ISO 27001 and SOC 2 certification, along with GDPR compliance, to ensure the confidentiality and security of data. To reduce downtime, Portkey.ai also commits to strict uptime service level agreements (SLAs) and sends proactive alerts when issues arise.

By addressing the problems that come up when deploying language model apps to production, Portkey.ai aims to make it easier to deploy and maintain large language model APIs in applications.

Portkey.ai states that integrating the platform will not slow down the user's application and may even improve the overall app experience, citing its benchmarked performance and intelligent caching features.
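The description does not say how Portkey.ai's caching works. As a generic illustration of why caching can improve response times, the Python sketch below shows a simple exact-match cache: identical request payloads (after canonicalizing key order) are served from memory instead of calling the model API again. `ExactMatchCache` and `fake_model` are hypothetical names, and this is not Portkey's implementation; a production cache would also need expiry and size limits.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class ExactMatchCache:
    """Generic exact-match response cache (illustrative, not Portkey's design)."""

    def __init__(self) -> None:
        self._store: Dict[str, Any] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key_for(payload: Dict[str, Any]) -> str:
        # sort_keys makes the key independent of dict key order.
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get_or_call(
        self, payload: Dict[str, Any], call: Callable[[Dict[str, Any]], Any]
    ) -> Any:
        key = self.key_for(payload)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = call(payload)  # only reach the model API on a miss
        self._store[key] = result
        return result


if __name__ == "__main__":
    calls = []

    def fake_model(payload):
        # Hypothetical stand-in for a real API call; records how often it runs.
        calls.append(payload)
        return f"answer to {payload['messages'][0]['content']}"

    cache = ExactMatchCache()
    req = {"model": "MODEL_NAME", "messages": [{"role": "user", "content": "Hi"}]}
    reordered = {"messages": [{"role": "user", "content": "Hi"}], "model": "MODEL_NAME"}
    print(cache.get_or_call(req, fake_model))        # answer to Hi
    print(cache.get_or_call(reordered, fake_model))  # answer to Hi (from cache)
    print(len(calls), cache.hits, cache.misses)      # 1 1 1
```

Keying on a hash of the canonical JSON means only equivalent requests hit the cache; matching merely similar prompts would need a different lookup strategy.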
