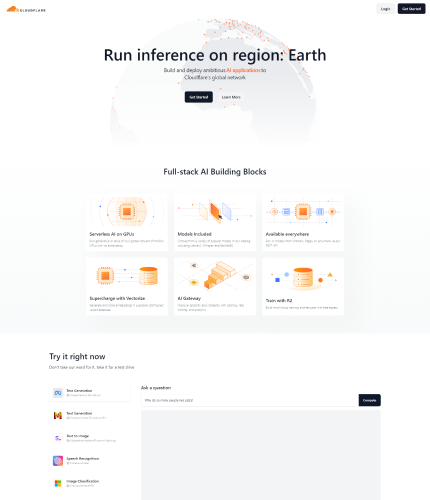

Cloudflare + AI is a solution that lets customers run fast, low-latency inference on pre-trained machine learning models natively on Cloudflare Workers.

It lets developers build and deploy ambitious AI applications on Cloudflare's global network, giving those applications worldwide availability and scalability.

The solution includes full-stack AI building blocks: serverless AI inference on GPUs, a selection of popular models, and the ability to run those models from Workers, Pages, or anywhere else through a REST API.
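
As a rough illustration of the "anywhere else through a REST API" path, the sketch below only builds the HTTP request for the Workers AI REST endpoint (`/client/v4/accounts/{account_id}/ai/run/{model}`) without sending it. The account ID, API token, and the example model name are placeholders you would supply; the model catalog changes over time, so check the current list before using any specific model ID.

```typescript
// Minimal sketch: build a POST request for the Workers AI REST endpoint.
// accountId, apiToken, and model are caller-supplied placeholders.
interface WorkersAIRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

function buildWorkersAIRequest(
  accountId: string,
  apiToken: string,
  model: string,
  prompt: string,
): WorkersAIRequest {
  return {
    // The model ID (e.g. "@cf/...") is appended directly to the path.
    url: `https://api.cloudflare.com/client/v4/accounts/${accountId}/ai/run/${model}`,
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiToken}`, // API token with Workers AI access
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ prompt }), // text-generation input
    },
  };
}

// Usage (needs a real account ID and token; fetch is global in Node 18+):
// const { url, init } = buildWorkersAIRequest(ACCOUNT_ID, API_TOKEN, "@cf/meta/llama-2-7b-chat-int8", "Hello");
// const res = await fetch(url, init);
// console.log(await res.json());
```

Separating request construction from the network call keeps the sketch easy to test and lets any HTTP client on any stack send the request.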

Through AI Gateway, Cloudflare + AI also provides caching, rate limiting, and analytics to improve scalability and reliability.
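
To make the caching and rate-limiting ideas concrete, here is a conceptual, in-memory sketch of a gateway sitting in front of a model call. It is not AI Gateway's implementation or API: `MiniGateway`, its parameters, and the fixed-window limiting strategy are hypothetical choices for illustration only.

```typescript
// Conceptual sketch of gateway-style caching and rate limiting.
// Hypothetical names; NOT Cloudflare AI Gateway's implementation or API.
type GatewayResult<T> =
  | { status: "hit"; value: T }
  | { status: "miss"; value: T }
  | { status: "rate_limited" };

class MiniGateway<T> {
  private infer: (prompt: string) => T; // upstream model call
  private cacheTtlMs: number;           // how long a cached answer stays fresh
  private maxRequests: number;          // upstream calls allowed per window
  private windowMs: number;             // length of the fixed rate-limit window
  private cache = new Map<string, { value: T; expiresAt: number }>();
  private windowStart = 0;
  private requestsInWindow = 0;

  constructor(infer: (prompt: string) => T, cacheTtlMs: number, maxRequests: number, windowMs: number) {
    this.infer = infer;
    this.cacheTtlMs = cacheTtlMs;
    this.maxRequests = maxRequests;
    this.windowMs = windowMs;
  }

  handle(prompt: string, now: number = Date.now()): GatewayResult<T> {
    // Serve identical prompts from the cache while the entry is fresh.
    const cached = this.cache.get(prompt);
    if (cached && cached.expiresAt > now) {
      return { status: "hit", value: cached.value };
    }

    // Fixed-window limit: start a new window once the current one has elapsed.
    if (now - this.windowStart >= this.windowMs) {
      this.windowStart = now;
      this.requestsInWindow = 0;
    }
    if (this.requestsInWindow >= this.maxRequests) {
      return { status: "rate_limited" };
    }
    this.requestsInWindow++;

    // Cache miss: call the model and remember the answer.
    const value = this.infer(prompt);
    this.cache.set(prompt, { value, expiresAt: now + this.cacheTtlMs });
    return { status: "miss", value };
  }
}
```

In this sketch, cache hits do not count against the rate limit, so repeated prompts cost neither quota nor inference time. If `T` is a `Promise`, the in-flight promise itself is cached, which deduplicates concurrent identical requests but would also cache a rejected call.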

Vectorize adds the ability to generate and store embeddings in a globally distributed vector database, so machine learning models can repeatedly run efficient searches over a user's own data.
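
The core operation a vector database performs can be sketched in a few lines: store embeddings under IDs, then return the entries most similar to a query embedding. The in-memory index below is a hypothetical illustration and does not use the Vectorize API; it uses brute-force cosine similarity, and the hand-written three-dimensional vectors stand in for real embeddings, which would come from an embedding model.

```typescript
// In-memory illustration of vector search; NOT the Vectorize API.
interface StoredVector {
  id: string;
  values: number[];
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  let dot = 0, normA = 0, normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // Cosine similarity is undefined for zero vectors; treat them as unrelated.
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

class InMemoryVectorIndex {
  private vectors: StoredVector[] = [];

  insert(items: StoredVector[]): void {
    this.vectors.push(...items);
  }

  // Brute-force top-k: score every stored vector, sort descending, keep k.
  query(query: number[], topK: number): { id: string; score: number }[] {
    return this.vectors
      .map((v) => ({ id: v.id, score: cosineSimilarity(query, v.values) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, topK);
  }
}

// Usage:
// const idx = new InMemoryVectorIndex();
// idx.insert([{ id: "cats", values: [1, 0, 0] }, { id: "cars", values: [0, 0, 1] }]);
// idx.query([0.9, 0.1, 0], 1); // best match is "cats"
```

Scanning every vector is linear in the collection size; production vector databases typically rely on approximate nearest-neighbor indexes to keep queries fast at scale.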

The tool offers a curated catalog of off-the-shelf models and lets users start from templates, with an emphasis on ease of use and fast deployment. Supported tasks include text generation, sentiment analysis, speech recognition, image classification, and translation.

With just a few lines of code, users can run AI inference with Workers AI and Vectorize on Pages, in their preferred framework, or from any stack via an API.

Prominent AI companies, including Meta, Nvidia, Microsoft, Hugging Face, and Databricks, rely on Cloudflare + AI.

It aims to help customers build dependable, secure, and cost-effective AI infrastructure without unexpected expenses. The tool also offers low-cost storage for training data and AI-generated assets through R2, making affordable multi-cloud architectures for training large language models possible.
