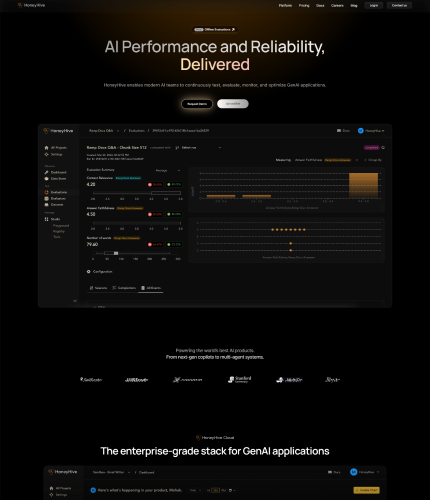

HoneyHive is an AI developer platform that helps teams safely deploy and continuously improve large language model (LLM) applications in production.

Its features work with any model, framework, or environment. The platform integrates mission-critical monitoring and evaluation capabilities to ensure the performance and quality of LLM agents.

It also lets teams confidently roll out LLM-powered products to their users. Developers can use monitoring features for observability and analytics, and evaluation test suites for offline evaluation.

Through the platform's collaborative prompt engineering solution, project managers and subject matter experts can work together on prompts in a version-controlled workspace. HoneyHive also uses AI-assisted root cause analysis to simplify debugging of complex chains, agents, and RAG pipelines.

It offers a model registry, version control, evaluation metrics, and guardrails. Data scientists can use it to track experiments and evaluate results, while application teams get self-serve access to data and insights. HoneyHive integrates with any LLM stack and supports any model, framework, or third-party plugin.

It takes a pipeline-centric approach and is designed specifically for agents, complex chains, and retrieval pipelines. Notably, its non-intrusive SDK does not proxy queries through HoneyHive's servers. With an emphasis on enterprise-grade security and scale, HoneyHive provides end-to-end encryption, role-based access control, and data protection measures.

To ensure secure data ownership, the platform can be deployed either in a company's Virtual Private Cloud (VPC) or on HoneyHive Cloud. Users can get around-the-clock help from founder-led support and dedicated customer success managers (CSMs) at any stage of their AI development process.
